First off, be sure to wait at least three and a half years between posts. It’s all about keeping your audience riveted in anticipation.

I spend a lot of my time making little cartographic pieces that don’t make it onto this blog. They’re just small, quick items that don’t really need a whole writeup, though I’ve previously collected some of them into “Odds and Ends” posts. Those entries, though, only cover a fraction of the cartographic ephemera I put together, most of which really has no permanent place to live on the Internet.

So, I’ve decided to combine a bunch of them into a book. Nearly forty little projects, all in a simple and mildly attractive PDF. I present to you: An Atlas of Minor Projects.

Grab your free copy and enjoy browsing through the various things that I make in my spare time!

While most of these projects were quick to make, they, together, represent quite a bit of effort. If you draw amusement, inspiration, or some other value from this collection, consider supporting my work. That can be as simple as just sharing this atlas with others! Or, if you have more to spare, you can use these donation links:

Hello.

This is an announcement.

Out of all the places I could have put an announcement, I will admit that it probably doesn't make the most logistical sense to put it here.

If I'd had the opportunity to put it somewhere that makes more sense for announcing things, such as Facebook or Twitter, I promise you I would have done that. However—and please don't become too distracted by this—but the vast majority of my social media accounts were hacked sometime back by an extremely persistent individual or entity whom I have thus far been unsuccessful at defeating, so I do not currently have anywhere else to put this.

Moving on.

As we discussed, there is an announcement. Soon it will be upon us. But first, a warm-up announcement:

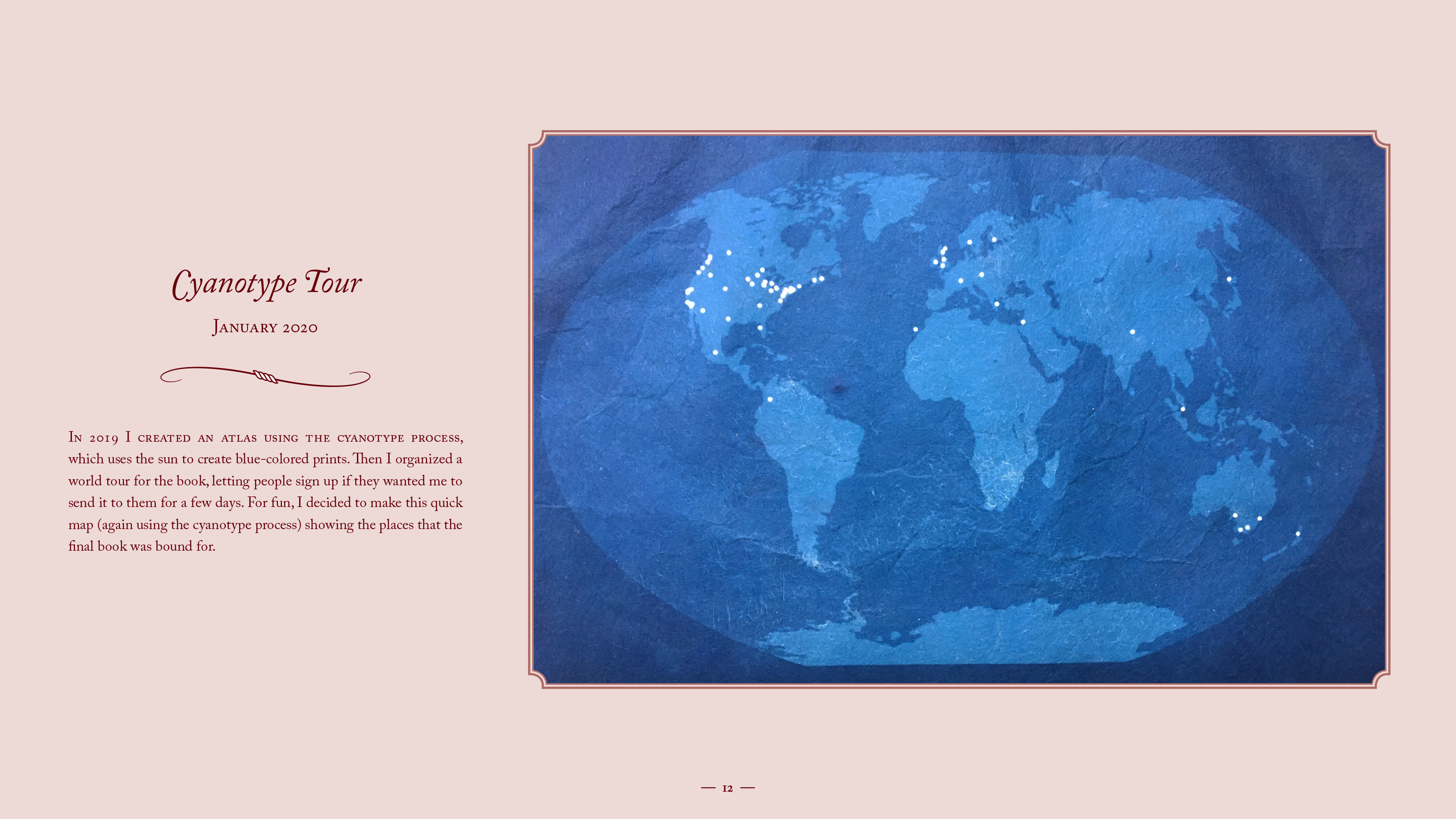

I told you this because I thought it might lend some credibility to the actual announcement, which is that I wrote a second book. For real this time. The book is finished. It has 518 pages. There is no going back at this point. There's a super official book page and everything.

As is tradition, a variety of ordering experiences are available.

For example, if you wish to be taken directly to the book page with no extra fanfare, please click the regular button:

If you wish to use a larger button to go directly to the book page, please click the big button:

If you wish to have a more difficult experience, the hard button is for you:

If you do not wish to interact with the book any further, please follow this button to safety:

If you want to feel slightly weirder than you currently do before visiting the book page, please click here:

If you wish for me to apologize for writing the book and/or for the bird collage, that option is also available:

If you just want to click a bunch of buttons, please go here:

---------------------------------------------------------------------------------

Okay. The announcement is complete. We may now proceed to the bonus phase.

Perhaps this event was not promotional enough for you. Perhaps you wish to be subjected to a truly unnecessary level of marketing both related and unrelated to my book. Perhaps you simply wish to experience the future in whatever form it takes. If this is the case, I have great news for you:

I recently learned how to use Instagram, and over the next several days, I intend to explore the limits of its potential, possibly even discovering new ways of using it. I will be relentless, and you will regret becoming involved, but you do have the opportunity to become involved if you wish. It's also completely possible that I decide against this and just post extreme close-ups of my belly button. Or something else could happen. One can never know these things.

Thank you for your time and patience. I hope you can find it in your hearts to still respect me after this.

-Allie

This is the story of how I came to realize that bigger and bigger boats is not a way to a meaningful life.

I once had a 13 feet long rowing boat Anna that I had converted to a cruiser by decking her. 1967 I sailed her on the Swedish west coast and in Limfjord Denmark. 1968 I sailed her to England via Denmark, Germany, Holland and Belgium. In most of the fifty harbors I visited the grown ups had advised me to get a bigger boat. It would be safer, faster and make me more happy they all said.

Obediently I went back to Sweden were I found the hull of a 40’ steamboat. She was made of iron 1885. I named her Duga and converted her to a staysail schooner and sailed her to Rio Brazil. On the way in Las Palmas, Canary Islands, a 72 feet Camper and Nicholson Ketch dropped her anchor next to Duga.

She flew a Norwegian flag. It was father and son, his wife and baby. They were on their way to the Caribbean to do charter and wanted the boat to be shiny. I was asked to do the masts. First they had to be scraped, then varnished seven times, a big job that would take plenty of time. As a bonus I was invited to have all my meals onboard. After a few weeks on board the huge luxuries ketch I found her size to be just right. In the evening after the days work was done it felt embarrassing and unfair that I had to row back to my much smaller Duga.

In less than a year my comfort zone had grown from a 13 foot converted rowing boat to a 72 feet ketch.

During the long sail to Rio I realized that I was trapped in the hedonic treadmill. It was a sobering lesson. The grown ups had promised me that I would be more happy in a bigger boat. It was true initially but not in the long term. I had been fine in my 13 feet Anna. After Rio I sold Duga and built Bris a small boat that I sailed happily in for many years.

Most people do not realize that they adapt to the size of their boats so they want bigger and bigger all the time. Science has found out about that and given it the name hedonic treadmill.

It is a consequence of hedonic adaptation. When your boss gives you a raise you will initially be happier. After a time you habituate to the larger salary and return your happiness set point. Even if you win a million on the lottery the same thing happens, first you get happy, then you return to your happiness set point. Luckily this also goes for bad luck. You lose your job and your house burns a thing that happens to many in war but after some time you return to your happiness set point.

In our consumer society most persons waste their life upgrading their possessions to be in the false belief that they will get happier.

2011 in Porto Santo Madeira I witnessed an example of how a family had wasted their life’s saving in the false believe that they get more happy in a big expensive boat. They planned to sail around the world and wanted a new boat from a yard with good reputation.

They had ordered a 36 feet long boat. They had to wait 2 or 3 years for delivery. After a year or so the salesman told them that now there was a new model and that that model would have a better second hand value. The new model was more expensive but the family did not like to louse money so the upgraded. Then the salesman told them that there was problems with fresh water in the Pacific and that they better get a water maker. The water maker was expensive but they liked to have plenty of water.

Then the salesman told them that it would be stupid to run the main engine just for the water maker. He told them they better get a gen set.

At this point the family was about to run out of money so the said that they had to wait and see.

The salesman then told them that it would be far more expensive to install the gen set after the boat was built. The family got the gen set too.

Now the boat was so expensive that they had to sell their house. Not only that they also decided to work an extra year to better their economy, losing a year of cruising time.

Now the boat was so expensive and contained most of their life savings so they decided they better get an insurance.

Now the insurance company said that once they got into the Pacific the insurance would be more expensive because it was so dangerous to sail there. That drained their economy even more.

Also as the boat was so expensive they had to worry about her and the wife told the husband that he had to clean and polish the boat very often so that it did not depreciate in money and the wife had to worry about the inside was shiny and they did not have time to be happy.

I was feeling very sorry for the family. There I was at the same dock, enjoying the same landscape, paying less harbor due as my boat was smaller. I had no expensive insurance and I did not have to polish my boat. I had an oar instead of an engine. I did not have to change oil on my oar nor change any fuel filters on it and so on. I could spend my time enjoying myself.

Even sadder this story is not unique, there are thousands of families like that.

The solution is to have simple habits. With simple habits you can live on a small boat have a meaningful and not worry about economy and other boring things.

It is not easy to live a meaningful life in this world full of salesmen and plenty of advertisement but a simple habits is a good start.

I got my introduction to simple habits in an unlikely place.

The spring of 1962 I was living with eastern orthodox monks on the Mount Athos peninsula in Greece.

The Autonomous Monastic State of the Holy Mountain is a region in northern Greece. There are twenty monasteries and different villages and houses that depend on them. Around 2000 monks live there in seclusion, introspection and prayer. The landscape is sometimes called “Christian Tibet”.

I was there to learn from their different lifestyle. I was young and searched for answers to the big questions.

After some time among them I noticed that lived a very repetitious life. Everything was done over and over and again and again. They had fixed habits for everything. As I was walking in the landscape I became friends with an eremite. One time I asked him about all those habits.

”You do everything over and over. Do you not find it boring always having to do the same thing all the time?” I asked him.

Sven, he told me,

”What you are noticing is my worldly life. You do not see my inner life. What I live for is to talk with God. I therefore simplify my worldly life because that gives me more time for God and that is what is important.”

I am an atheist. My passion is to find out about life. That eremite thought me something of value. Since then I have simplified my worldly life just like the monks on Athos. That gives me time to think about the mystery of life and each time I find out something it gives my life meaning.

Many humans are bored, their minds are fettered by worldly things. That prevents them living a meaningful life. Free animals are not bored nor should humans be.

One of my zeal’s is for the good boat. I also try to find answers to the big questions. That way of life is not for everyone. A meaningful life can also be found by gardening or painting or writing or like a bird just sitting on a twig singing all day. The main thing is to be interested in something and not caring to much about consuming.

Yukio Mishima expressed it well when he was asked how come he always delivered his manuscript so punctual. He answered: My fellow authors live bohemian lifes but their minds are bureaucratical. I live a punctual life that way I can write original books

If you like to be a free spirit, live a life of simple habits.

In the sixties people lived simpler lives, boats were smaller and people were not less happy.

One hundred years ago life was even simpler, there was no electricity and people were not less happy.

1845 Thoreaux built a cabin near Walden Pound and lived a meaningful life.

1750 Rosseau urged people to go back to nature.

For thousands of years wise men have urged people to live simple meaningful life’s. Building a small boat and sailing the huge oceans is one of many way’s of doing it.

SUSTAINABILITY – SIMPLE HABITS SIMPLE BOATS

Carl Sagan died 24 years ago, rejoining the cosmos. Whenever I crack open one of his books today, I get this feeling that he’s still here, his love and passion reaching out to me—a random human being alive in the year 2020—across time and space. How could that be?

Books are magical.

“The Cosmos is all that is or ever was or ever will be. Our feeblest contemplations of the Cosmos stir us — there is a tingling in the spine, a catch in the voice, a faint sensation, as if a distant memory, of falling from a height. We know we are approaching the greatest of mysteries.”

I am, as you are, a beneficiary of all the books ever written.

I cannot imagine a world where there is nothing equivalent to words or books. On the worst days of my life I would have no saving grace, no life boat. I know it sounds like an exaggeration, but I was saved, in many ways, by other people’s writing — all of them strangers, most of them already dead. (And not just saved, but rebuilt from broken pieces.)

My love for life led me to books, but books further fuelled this love. No one could read Jack Kerouac and continue to be placid or neutral about life:

“Happy. Just in my swim shorts, barefooted, wild-haired, in the red fire dark, singing, swigging wine, spitting, jumping, running — that’s the way to live. All alone and free in the soft sands of the beach by the sigh of the sea out there, with the Ma-Wink fallopian virgin warm stars reflecting on the outer channel fluid belly waters.”

No one could read a Charles Bukowski poem and not burn with life or fall in love with the idea of falling in love:

“your life is your life

don’t let it be clubbed into dank submission.

be on the watch.

there are ways out.

there is light somewhere.

it may not be much light but

it beats the darkness.

be on the watch.

the gods will offer you chances.

know them.

take them.

you can’t beat death but

you can beat death in life, sometimes.

and the more often you learn to do it,

the more light there will be.

your life is your life.

know it while you have it.

you are marvelous

the gods wait to delight

in you.”

And very few of us are left untouched or unmoved by the genius of Shakespeare:

“She should have died hereafter;

There would have been a time for such a word.

To-morrow, and to-morrow, and to-morrow,

Creeps in this petty pace from day to day

To the last syllable of recorded time,

And all our yesterdays have lighted fools

The way to dusty death. Out, out, brief candle!

Life’s but a walking shadow, a poor player

That struts and frets his hour upon the stage

And then is heard no more: it is a tale

Told by an idiot, full of sound and fury,

Signifying nothing.”

Life’s but a walking shadow, told by an idiot, full of sound and fury.

Wow.

I remember how, in the depths of my depression, walking around with Richard Russo’s “Empire Falls”, feeling strangely comforted by the flowing rhythm of Russo’s writing and the sorry tale of Miles Roby. This was the story of a man who ran a diner in a blue-collar American town full of abandoned mills, a setting far away from the circumstances of my own life, but here, for the first time as a 20-year-old, I learned of the river as a metaphor for life:

“Lives are rivers. We imagine we can direct their paths, though in the end there’s but one destination, and we end up being true to ourselves only because we have no choice.”

Books chart the tender and violent movements of the human heart, and remind us that our individual condition is also a universal condition, by virtue of our human-ness. We might not want to admit it, but we are all connected, mirrors and fragments of each other—lost bits floating around the universe—waiting for our final reunion.

“After all, what was the whole wide world but a place for people to yearn for their hearts’ impossible desires, for those desires to become entrenched in defiance of logic, plausibility, and even the passage of time, as eternal as polished marble?”

Lastly I end with this quote by Carl Sagan, who knows, as much as I do, about the sheer magic of books:

“A book is made from a tree. It is an assemblage of flat, flexible parts imprinted with dark pigmented squiggles. One glance at it and you hear the voice of another person, perhaps someone dead for thousands of years.

Across the millennia, the author is speaking, clearly and silently, inside your head, directly to you. Writing is perhaps the greatest of human inventions, binding together people, citizens of distant epochs, who never knew one another. Books break the shackles of time ― proof that humans can work magic.”

By Leo Babauta

The clients I work with almost all put incredible expectations on themselves — they have higher standards than almost anybody I know. It’s why they work with me.

It can be hard to see, but the expectations they’ve set for themselves often stand in the way of what they want the most.

It’s hard to see, because they became successful because of those expectations. It’s what got them this far.

But after a certain point, the expectations become the anchor, not the engine.

The breakthrough to the next level for many of us who perform at high levels — and actually for people of all kinds — is to let go of all expectations.

Tony Robbins is famous for saying, “Turn your expectations … into appreciation.” It’s a beautiful saying, and helps us to start to see where expectations are getting in the way.

Let’s take a look.

Expectations Often Only Seem to Help

I know lots of people who improved their lives because they had an expectation that they should be better.

“I should be in better shape. I should have a better job. I should be more productive. I should be more discipined. I should be more mindful. I should eat healthier.”

I know these expectations well — that was me at the start of my journey. It’s how almost all of us start out.

We take these expectations and turn them into action. “OK, it’s finally time to get off my butt and do something about this problem!”

And that’s when change starts to happen — when we’ve motivated ourselves to start.

So expectations can seem like they’re doing a lot of work, because they’re the things that got us to start.

But then they start getting in the way:

- I expected to be great at this habit after a few days, but a week into it and I still suck at it

- I expected to be perfect at this habit but I’m still struggling

- I expected to keep my streak going past 2 weeks but then I missed a day

- I expected to really enjoy yoga or meditation but it’s way harder than I thought

- This doesn’t meet my expectations, so it sucks (can’t appreciate it)

- I’m so focused on how I want things to turn out (expectations) that I miss the beauty of what’s happening in this moment

And so on.

The expectations actually hold us back from the simplicity of discipline.

The Simplicity of Discipline

The things we want to be disciplined at are actually fairly simple in a lot of ways.

We want to be consistent with the journaling habit, or meditation, or exercise? Just start, as simply as possible. Do that again the next day. If you miss a day, no problem — just start again. Over and over.

All of the problems of habits start to go away when we drop expectations. We can start to appreciate doing the habit, in this moment, instead of being so concerned with how it will turn out in the future.

It’s very simple, when we drop the expectations.

A daily writing habit becomes as simple as picking up the writing tool and doing it, without any expectation that it be any good or that people love it.

A daily exercise habit becomes as simple as putting your shoes on, going outside, and going for a walk or a run or a hike or a bodyweight workout. You don’t need fancy equipment, the perfect program, or a membership to anything. You just start moving, as simply as possible.

Of course, we have all kinds of hangups when it comes to exercise, or writing, or eating. These come from years of beating ourselves up (or getting judged by others, and internalizing those judgments). We can stop beating ourselves up the moment we drop expectations. Then, without the layers of self-judgment, we can simply get moving.

Every time we “fail” at a habit, we get discouraged. Because of expectations. What if we dropped any expectation that we be perfect at it, and just return to doing the habit at the earliest opportunity? Over and over again.

It all becomes exceedingly simple, once we can drop expectations. And if we become fully present, it can even be joyful! The joy of being in the moment, doing something meaningful.

Dropping the Expectations

So simple right? Now we just have to figure out how to drop those pesky expectations.

Here’s the thing: it turns out the human mind is a powerful expectations generator. Like all the time, it’s creating expectations. Just willy nilly, without any real grounding in reality. Out of thin air.

So do we just turn off the expectations machine? Good luck. I’ve never seen anyone do that. In fact, the hope that we can just turn off the expectations is in itself an expectation.

The practice is to just notice the expectations. Bring a gentle awareness to them. Just say, “Aha! I see you, Expectation. I know you’re the reason I’m feeling discouraged, overwhelmed, behind, frustrated, inadequate.”

And it’s true, isn’t it? We feel inadequate because we have some expectation that we be more than this. We feel behind because of some made up expectations of what we should have done already. We feel discouraged because we haven’t met some expectation. We feel overwhelmed because we have an expectation that we should be able to handle all of this easily and at once. We feel frustrated because someone (us, or someone else) has failed to meet an expectation.

All of these feelings are clear-cut signs that we have an expectation. And we can simply bring awareness to the expectation.

Then we’re in a place of choice. Do I want to hold myself and everything else to this made-up ideal? Or can I let go of that and simply see things as they are? Simply do the next step.

Seeing things as they are, without expectations, is seeing the bare experience, the actual physical reality of things, without all of the ideals and fantasies and frustrations we layer on top of reality.

This means that when we miss a day, we don’t have to get caught up in thoughts about how that sucks — we just look at the moment we’re in, and sit down on the meditation cushion. Break out the writing pad. Do the next thing, with clear eyes.

So in this place of choice, we can decide whether we want to stay in this fantasy world of expectations … or drop out of it into the world as it is. Which is wide open. Ready for us to go do the next thing.

That’s the choice we can make, every time, if we are aware of our expectations in the moment.

Two Simple Discipline Practices

Let’s talk briefly about two practices: the discipline of doing work, and the discipline of sticking consistently to a habit.

Discipline of Doing Work: So let’s say you have a task list, with 5 important tasks, and 10 smaller ones (including respond to Tanya’s email, buy a replacement faucet for the kitchen sink, etc.).

What would stand in the way of doing all of that? Not being clear on what to do first (or the expectation that you pick the “right” task), feeling resistance to doing it (expectation that work be comfortable), worried about how it will turn out (expectation that people think you’re awesome), stressed about all the things you have to do today (expectation that you have a calm, orderly, simple day), wanting to run to your favorite distractions (expectation that things be easy).

So noticing these difficulties caused by expectations … you can decide if you want to be in this place of expectations, or if you’d like to drop them and just be in the moment as it is.

Then you do the simple discipline of work:

- Pick one task. Whatever feels important right now. Let go of expectations that it be the right task.

- Put everything else aside — other tasks, distractions. Let go of the expectation that you do everything right now, and that what you do should be easy and comfortable.

- Do the task. Be in the moment with it. Let go of expectations of comfort, or expectations that you succeed at this and that others not judge you. Just do. Find the joy of doing.

- Stay with it as long as you can. If you get interrupted, simply come back.

- When you’re done, or it’s time to move on, pick something else. Let go of expectations that you have everything done right away, and just pick one thing to do next.

And repeat.

It’s important to make a distinction — between letting go of the expectation that you not be tired, and overworking yourself. We are not advocating overworking yourself to burnout. But that doesn’t mean we should never do anything when we’re not feeling it. We have to let go of the expectation that we not be tired when we work … and also the expectation that we never stop working. Rest when you need it, but don’t let yourself off the hook just because you don’t feel like it.

Discipline of Consistent Habits: Let’s say you want to get more consistent with habits. You pick one — journaling, for example.

What would get in the way of consistency with this habit? Not making space for it in your day (expecting things to come easy without fully committing to it), not enjoying the habit (expecting things to be comfortable and fun), not doing as well as you hoped and getting discouraged (expectations that you’ll be great at it), missing some days and getting discouraged (expectation that you be perfectly consistent), resisting doing it when you have other things to do (expectation that you don’t have to sacrifice something you want to do this habit).

So noticing these difficulties caused by expectations … you can decide if you want to be in this place of expectations, or if you’d like to drop them and just be in the moment as it is.

Then you do the simple discipline of this habit:

- Carve out space. Commit yourself to doing this habit in that space.

- Do the habit. Notice if you’re feeling resistance, and just do it.

- You might even appreciate the habit as you do it, if you let go of how you think it should be. You might find the joy of doing it as well.

- Do it the next day, and the next day.

- If you miss a day, simply start again, letting go of expectations about yourself.

If you’re struggling with feeling tired and not wanting to do something, this is because of an expectation that you not be tired, and not have to do things when you don’t feel like it. Letting go of that, you can simply do the task or habit.

You’ll notice that none of this says that doing the task or habit will be easy, comfortable, or without fear or tiredness or uncertainty. That would be an expectation. In fact, there’s a good chance that these will be present for you in the moment, as you do the task or habit. That’s OK — we’re not going to expect it to be any different than it is.

So then, letting go of that, we simply turn to what’s in the moment, and get on with it.